
ParEGO

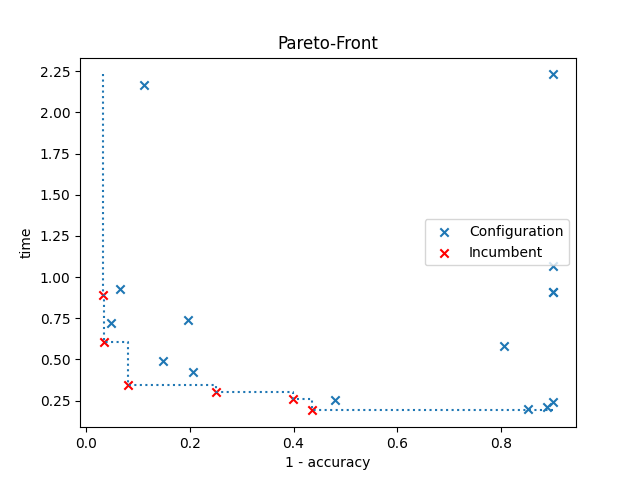

This example shows how to use multi-objective optimization with ParEGO. An MLP is tuned on the digits dataset for two objectives at once: accuracy and run time. The evaluated configurations are then plotted, with the best ones highlighted as a Pareto front. Red crosses mark the incumbents, i.e. the configurations SMAC selected as best.
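As background, ParEGO reduces the multi-objective problem to a series of single-objective ones: it scalarizes the normalized cost vector with a randomly drawn weight vector, using the augmented Tchebycheff function. The following is a minimal sketch of that idea, not SMAC's implementation. The function name `augmented_tchebycheff`, the value `rho=0.05`, and the sample costs are assumptions for illustration.

```python
import numpy as np


def augmented_tchebycheff(costs: np.ndarray, weights: np.ndarray, rho: float = 0.05) -> float:
    """Scalarize a cost vector whose objectives are already normalized to [0, 1]."""
    weighted = weights * costs
    # Largest weighted objective plus a small weighted-sum term.
    return float(np.max(weighted) + rho * np.sum(weighted))


rng = np.random.default_rng(0)

# Draw a random weight vector that is non-negative and sums to 1.
weights = rng.random(2)
weights /= weights.sum()

# Hypothetical normalized costs: [1 - accuracy, time].
costs = np.array([0.2, 0.7])
scalar_cost = augmented_tchebycheff(costs, weights)
print(weights, scalar_cost)
```

Drawing a fresh weight vector at each iteration steers the search toward a different region of the trade-off curve, which is how a single-objective optimizer can end up covering a Pareto front.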

During optimization, SMAC evaluates each configuration on up to two different seeds (`max_config_calls=2`). The plot therefore shows the mean error (1 - accuracy) and mean run time of each configuration.
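The averaging described above amounts to taking the per-objective mean over the seeds on which a configuration was evaluated. Here is a minimal sketch with made-up numbers. The dictionary `costs_per_seed` and its values are hypothetical; in the example itself, `smac.runhistory.average_cost` performs this step.

```python
import numpy as np

# Hypothetical costs of one configuration: seed -> [1 - accuracy, time in seconds].
costs_per_seed = {
    0: [0.04, 0.58],
    1: [0.06, 0.62],
}

# Average each objective separately across the seeds.
mean_cost = np.mean(list(costs_per_seed.values()), axis=0)
print(mean_cost)
```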

[WARNING][target_function_runner.py:74] The argument budget is not set by SMAC: Consider removing it from the target function.

[INFO][abstract_initial_design.py:147] Using 5 initial design configurations and 0 additional configurations.

[INFO][abstract_intensifier.py:515] Added config 3623cc as new incumbent because there are no incumbents yet.

[INFO][abstract_intensifier.py:602] Config 540db2 is a new incumbent. Total number of incumbents: 2.

[INFO][abstract_intensifier.py:602] Config 0c9159 is a new incumbent. Total number of incumbents: 3.

[INFO][abstract_intensifier.py:594] Added config 3b5efd and rejected config 540db2 as incumbent because it is not better than the incumbents on 2 instances:

[INFO][abstract_intensifier.py:594] Added config 680302 and rejected config 0c9159 as incumbent because it is not better than the incumbents on 2 instances:

[INFO][abstract_intensifier.py:602] Config 088e36 is a new incumbent. Total number of incumbents: 4.

[INFO][abstract_intensifier.py:602] Config dbd4ad is a new incumbent. Total number of incumbents: 5.

[INFO][abstract_intensifier.py:594] Added config f04f3e and rejected config 3623cc as incumbent because it is not better than the incumbents on 2 instances:

[INFO][abstract_intensifier.py:602] Config 04c2c7 is a new incumbent. Total number of incumbents: 6.

[INFO][abstract_intensifier.py:602] Config 6545ed is a new incumbent. Total number of incumbents: 7.

[INFO][smbo.py:327] Configuration budget is exhausted:

[INFO][smbo.py:328] --- Remaining wallclock time: -0.8852415084838867

[INFO][smbo.py:329] --- Remaining cpu time: inf

[INFO][smbo.py:330] --- Remaining trials: 158

Validated costs from default config:

--- [0.60155834 0.15334892]

Validated costs from the Pareto front (incumbents):

--- [0.03561281 0.60063148]

--- [0.08151114 0.3420825 ]

--- [0.03200015 0.87647736]

--- [0.39984834 0.25476861]

--- [0.25040777 0.30232453]

--- [0.43516945 0.18795562]
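As a sanity check on the numbers printed above, one can test whether any of the validated incumbent costs dominates another (both objectives are minimized). This sketch uses a hypothetical helper, `is_dominated`, which is not part of SMAC; the cost values are copied from the output above.

```python
import numpy as np


def is_dominated(point: np.ndarray, others: np.ndarray) -> bool:
    """Return True if some row of `others` is no worse in every objective
    and strictly better in at least one (minimization)."""
    no_worse = np.all(others <= point, axis=1)
    strictly_better = np.any(others < point, axis=1)
    return bool(np.any(no_worse & strictly_better))


# Validated incumbent costs from the output above: [1 - accuracy, time].
incumbent_costs = np.array(
    [
        [0.03561281, 0.60063148],
        [0.08151114, 0.3420825],
        [0.03200015, 0.87647736],
        [0.39984834, 0.25476861],
        [0.25040777, 0.30232453],
        [0.43516945, 0.18795562],
    ]
)
default_cost = np.array([0.60155834, 0.15334892])

dominated = [is_dominated(c, incumbent_costs) for c in incumbent_costs]
print(dominated)
print(is_dominated(default_cost, incumbent_costs))
```

On these validated numbers, no incumbent dominates another. The default configuration is not dominated either: it has the highest error of the group but also the shortest run time.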

from __future__ import annotations

import time
import warnings

import matplotlib.pyplot as plt
import numpy as np
from ConfigSpace import (
    Categorical,
    Configuration,
    ConfigurationSpace,
    EqualsCondition,
    Float,
    InCondition,
    Integer,
)
from sklearn.datasets import load_digits
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neural_network import MLPClassifier

from smac import HyperparameterOptimizationFacade as HPOFacade
from smac import Scenario
from smac.facade.abstract_facade import AbstractFacade
from smac.multi_objective.parego import ParEGO

__copyright__ = "Copyright 2021, AutoML.org Freiburg-Hannover"
__license__ = "3-clause BSD"

digits = load_digits()


class MLP:
    @property
    def configspace(self) -> ConfigurationSpace:
        cs = ConfigurationSpace()

        n_layer = Integer("n_layer", (1, 5), default=1)
        n_neurons = Integer("n_neurons", (8, 256), log=True, default=10)
        activation = Categorical("activation", ["logistic", "tanh", "relu"], default="tanh")
        solver = Categorical("solver", ["lbfgs", "sgd", "adam"], default="adam")
        batch_size = Integer("batch_size", (30, 300), default=200)
        learning_rate = Categorical("learning_rate", ["constant", "invscaling", "adaptive"], default="constant")
        learning_rate_init = Float("learning_rate_init", (0.0001, 1.0), default=0.001, log=True)

        cs.add_hyperparameters([n_layer, n_neurons, activation, solver, batch_size, learning_rate, learning_rate_init])

        use_lr = EqualsCondition(child=learning_rate, parent=solver, value="sgd")
        use_lr_init = InCondition(child=learning_rate_init, parent=solver, values=["sgd", "adam"])
        use_batch_size = InCondition(child=batch_size, parent=solver, values=["sgd", "adam"])

        # We can also add multiple conditions on hyperparameters at once:
        cs.add_conditions([use_lr, use_batch_size, use_lr_init])

        return cs

    def train(self, config: Configuration, seed: int = 0, budget: int = 10) -> dict[str, float]:
        lr = config.get("learning_rate", "constant")
        lr_init = config.get("learning_rate_init", 0.001)
        batch_size = config.get("batch_size", 200)

        start_time = time.time()

        with warnings.catch_warnings():
            warnings.filterwarnings("ignore")

            classifier = MLPClassifier(
                hidden_layer_sizes=[config["n_neurons"]] * config["n_layer"],
                solver=config["solver"],
                batch_size=batch_size,
                activation=config["activation"],
                learning_rate=lr,
                learning_rate_init=lr_init,
                max_iter=int(np.ceil(budget)),
                random_state=seed,
            )

            # Returns the 5-fold cross validation accuracy
            cv = StratifiedKFold(n_splits=5, random_state=seed, shuffle=True)  # to make CV splits consistent
            score = cross_val_score(classifier, digits.data, digits.target, cv=cv, error_score="raise")

        return {
            "1 - accuracy": 1 - np.mean(score),
            "time": time.time() - start_time,
        }

def plot_pareto(smac: AbstractFacade, incumbents: list[Configuration]) -> None:
    """Plots configurations from SMAC and highlights the best configurations in a Pareto front."""
    average_costs = []
    average_pareto_costs = []
    for config in smac.runhistory.get_configs():
        # Since we use multiple seeds, we have to average them to get only one cost value pair for each configuration
        average_cost = smac.runhistory.average_cost(config)

        if config in incumbents:
            average_pareto_costs += [average_cost]
        else:
            average_costs += [average_cost]

    # Let's work with a numpy array
    costs = np.vstack(average_costs)
    pareto_costs = np.vstack(average_pareto_costs)
    pareto_costs = pareto_costs[pareto_costs[:, 0].argsort()]  # Sort them

    costs_x, costs_y = costs[:, 0], costs[:, 1]
    pareto_costs_x, pareto_costs_y = pareto_costs[:, 0], pareto_costs[:, 1]

    plt.scatter(costs_x, costs_y, marker="x", label="Configuration")
    plt.scatter(pareto_costs_x, pareto_costs_y, marker="x", c="r", label="Incumbent")
    plt.step(
        [pareto_costs_x[0]] + pareto_costs_x.tolist() + [np.max(costs_x)],  # We add bounds
        [np.max(costs_y)] + pareto_costs_y.tolist() + [np.min(pareto_costs_y)],  # We add bounds
        where="post",
        linestyle=":",
    )

    plt.title("Pareto-Front")
    plt.xlabel(smac.scenario.objectives[0])
    plt.ylabel(smac.scenario.objectives[1])
    plt.legend()
    plt.show()

if __name__ == "__main__":
    mlp = MLP()
    objectives = ["1 - accuracy", "time"]

    # Define our environment variables
    scenario = Scenario(
        mlp.configspace,
        objectives=objectives,
        walltime_limit=30,  # After 30 seconds, we stop the hyperparameter optimization
        n_trials=200,  # Evaluate max 200 different trials
        n_workers=1,
    )

    # We want to run five random configurations before starting the optimization.
    initial_design = HPOFacade.get_initial_design(scenario, n_configs=5)
    multi_objective_algorithm = ParEGO(scenario)
    intensifier = HPOFacade.get_intensifier(scenario, max_config_calls=2)

    # Create our SMAC object and pass the scenario and the train method
    smac = HPOFacade(
        scenario,
        mlp.train,
        initial_design=initial_design,
        multi_objective_algorithm=multi_objective_algorithm,
        intensifier=intensifier,
        overwrite=True,
    )

    # Let's optimize
    incumbents = smac.optimize()

    # Get cost of default configuration
    default_cost = smac.validate(mlp.configspace.get_default_configuration())
    print(f"Validated costs from default config: \n--- {default_cost}\n")

    print("Validated costs from the Pareto front (incumbents):")
    for incumbent in incumbents:
        cost = smac.validate(incumbent)
        print("---", cost)

    # Let's plot a pareto front
    plot_pareto(smac, incumbents)

Total running time of the script: (0 minutes 36.470 seconds)